I’m hopeful that one day we’ll look back at this moment and laugh a little sheepishly and with relief that we avoided turning over our brains to generative AI. Some folks may feel a little silly that they were so tempted, but us early AI-haters won’t tease you about it. We’ll ignore it with kindness, like we do our lifelong friends’ early-20s boyfriends and regrettable tattoo choices.

If you’re a student wondering why I–like many of your high school teachers and professors–really, really, really want you to stay away from generative (more accurately termed regurgitative because it coughs up what others have already said rather than creating anything) AI, here are some reasons why:

You are smarter than AI on topics you know a lot about–and not smart enough to realize when AI is fooling you. I have tested several AI products over the years, and anytime I ask any of them a complex question that I know the answer to, they get it wrong. Not so terribly wrong that you would be tipped off if you know only a little about the topic, but wrong enough that an expert on the topic (like your teachers!) will see that you didn’t bother doing your research. Have you ever watched a fictional TV show about something you know a lot about–and seen how very little the writers know about it? Ask a police officer about a cop show or a doctor about a medical drama. Ask a teacher about Abbott Elementary or Saved by the Bell or Head of the Class or any other show set in a school and, even if they really enjoy the show, they’ll tell you that the actual job is nothing like what you see on TV. You’d embarrass yourself if you described hospitals as if they were really like Grey Sloan Memorial or police officers as if they were the characters in Mark Wahlberg movies. When you share information you derived from AI, you sound naive and uninformed.

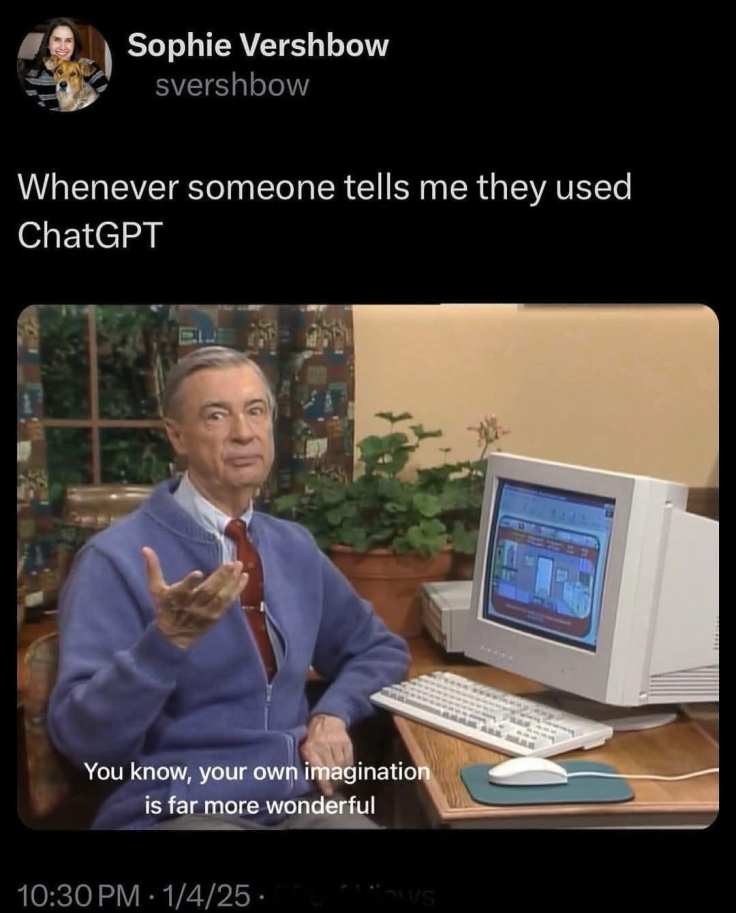

You do yourself a cognitive disservice. If you’re in your late teens through early 20s, you are in an amazing time for brain development! Just as it’s easier to put on muscle when you’re young, whereas when you are old you have to fight to keep the muscle mass you do have, right now your brain is ready to do some impressive work to set you up for long-term cognitive health. Education seems to actually change the structure of our brains for the better. When you outsource thinking, even about small tasks, you miss opportunities to strengthen your brain–and even if you change course later and start studying, you will have missed moments when your work to improve your brain would have had the most impact. As with any physical skill–from playing the guitar to drawing to throwing a baseball or driving a car–if you don’t practice thinking, you won’t become good at it.

Learning is a pleasure. Learning might seem like a drag when you aren’t getting to decide what to learn or how much time or effort to put into learning it, but as you progress in college, you get to focus more on your own interests (though it’s good to be open to learning things you don’t think you’ll like, too!). When you skip the readings, don’t write the assignments, and don’t create art, you miss a chance to experience a challenge and the feelings of relief and pride that come from meeting it.

One definition of addiction is that it is the narrowing of what gives us pleasure. Learning is the opposite of that, because it expands what gives us pleasure. It introduces us to new sources of delight, yes, but the very act of learning–regardless of what we are learning–is pleasurable. If you ever get a chance to see a baby or a young child learn something for the first time (a first step, how to put on their shoes, how to ride a bike), you’ll see delight. When you are in school, your main task is to learn–something that you’ll have to fight to get to do in other stages of adulthood.

AI requires plagiarism. Generative AI steals from authors and artists. It takes their work and trains on it without paying them. And it often reproduces their work without citation. AI itself steals, and if you pay for it, that’s like buying fenced goods fully knowing that they are stolen. AI companies wouldn’t exist if they had to pay for the content they steal from artists and authors to make their products, as their leaders have whined to regulators.

AI data centers hurt people and communities, and AI companies undermine local democracy to prevent communities from saying no to their pollution. The environmental impact of water misuse falls more heavily on communities of color and poor people. This is environmental racism and classism. Data centers are often built in areas where local people don’t have much power to prevent their arrival. If tech companies cared to solve these issues, they would do so before imposing risks on communities that can’t easily fight back. Instead, in keeping with their “move fast and break things” mindset, tech leaders overwhelm local democracies to ensure that everyday people have no say in what their lives in their own communities will look like.

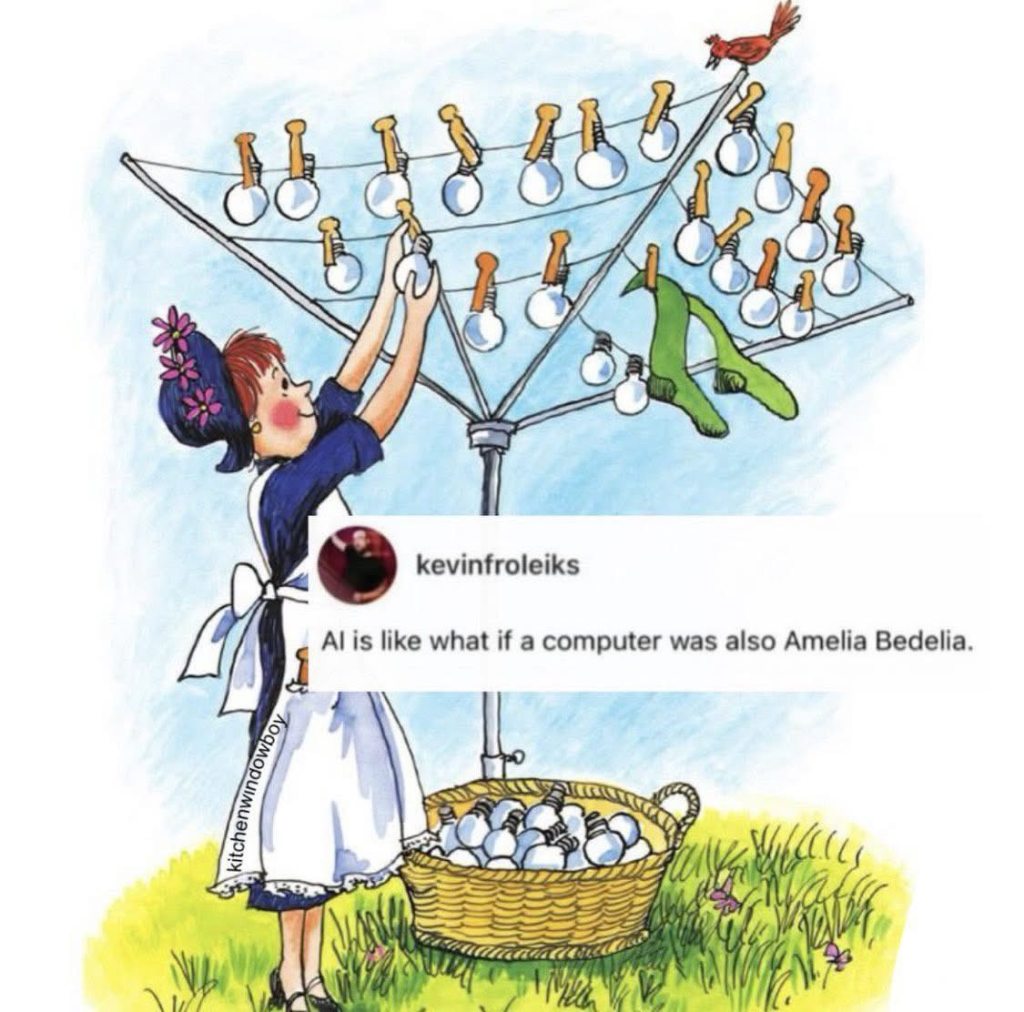

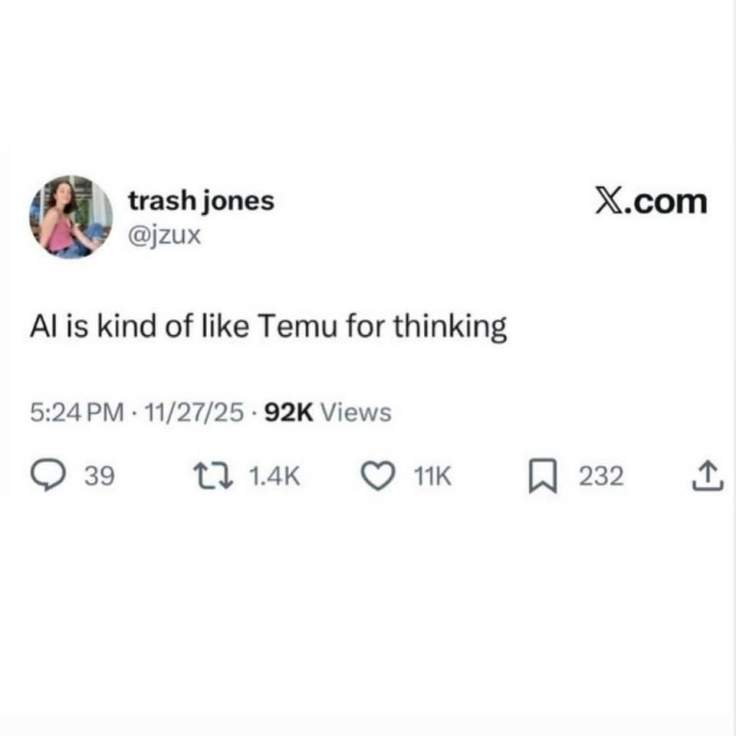

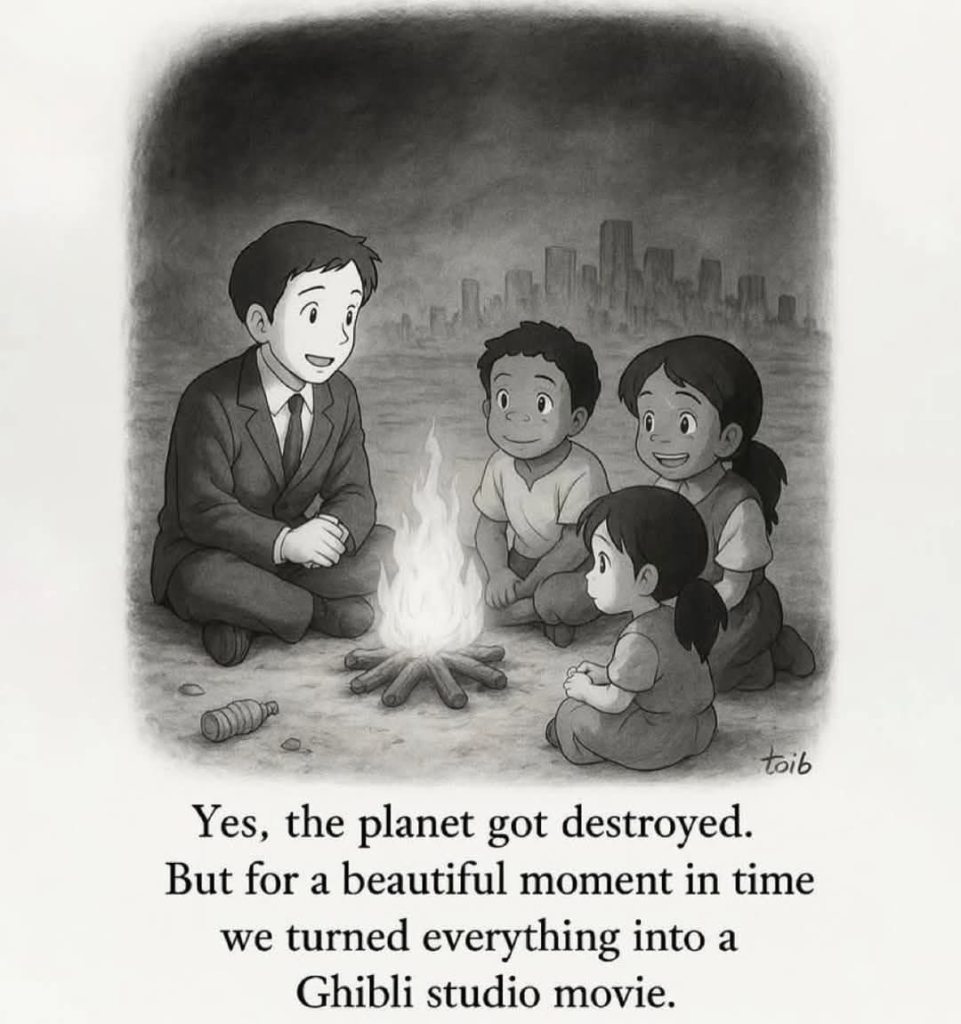

AI elevates the status quo and, inherently, can produce nothing innovative. Generative AI is merely a prediction tool, guessing at the next word based on what’s already been published. It can NEVER say something innovative, because it just picks the next word based on what already exists—unless it’s just hallucinating. It is, by definition, either making shit up or repeating what is already known. And that means that it’s not even assessing whether the information is accurate. It repeats content that is already popular, even if it’s not accurate—which means it elevates content that is common even if it’s also wrong. It just makes the loudest voices louder. When you use generative AI, you miss a chance to learn from real innovators and to uplift their voices.

I want my students to do more than repeat the status quo, and every employer is looking to hire people who are innovative. Repeating what has already been done (which is the only thing generative AI can do) isn’t how you get a good job or make a meaningful contribution to your field or our world. AI prevents you from innovating, and it keeps you disconnected from what is cutting edge in your field.

AI prevents your teachers from teaching you. To teach you well, your teachers need to know what you actually know so they can teach you what you don’t know. When a student in my class uses AI, they have contaminated the data that I need to analyze in order to teach them and their classmates; this is unethical treatment of their peers because it shifts the class away from what others need to learn. For example: If 90% of the class uses generative AI and, in doing so, gives me the impression that they know something they don’t, I may move on without teaching the material they need to learn, which is a disservice to the other students who want to actually learn it.

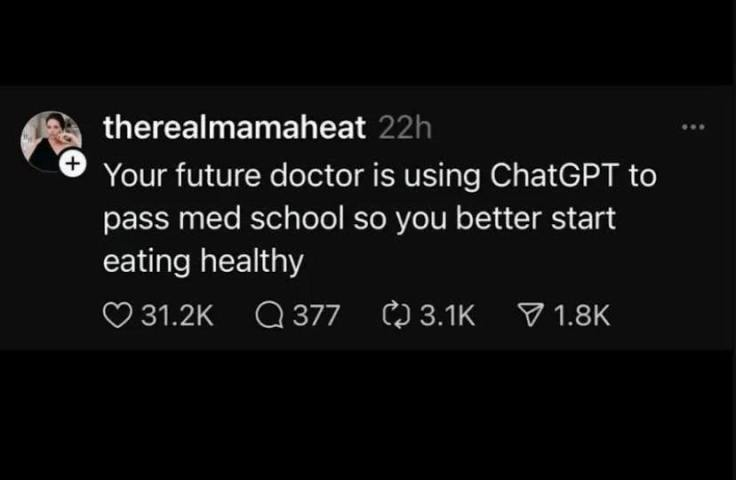

AI lies to you–and your teachers–about your competency. When you submit AI-generated “work,” you may trick yourself into thinking that you learned. Later, though, you’ll discover that you don’t have that skill after all–and the stakes might be much higher than merely a grade on a paper. It is also academically dishonest because it messes up our success rates on external exams; if we think you’re ready to take an external exam, like an FAA exam (for pilots), the NCLEX (for nursing), or the bar exam (for law school), based on your AI-enhanced performance in class, but then you bomb it because you’ve given us the wrong impression, our programs lose credibility, which is a disservice to your classmates, to the taxpayers and donors who support these programs, and to alumni who worked to graduate from colleges with solid reputations. Schools are rightly proud of their graduates’ standardized exam scores, colleges brag in their recruiting about the percentage of students who pass such exams on their first attempt, and potential students weigh this information as they choose where to spend their tuition money. You tarnish your school’s reputation when you don’t let us support you in passing those exams because you don’t let us know that you need help. You cheapen the value of your degree for our alumni–and for your future self.

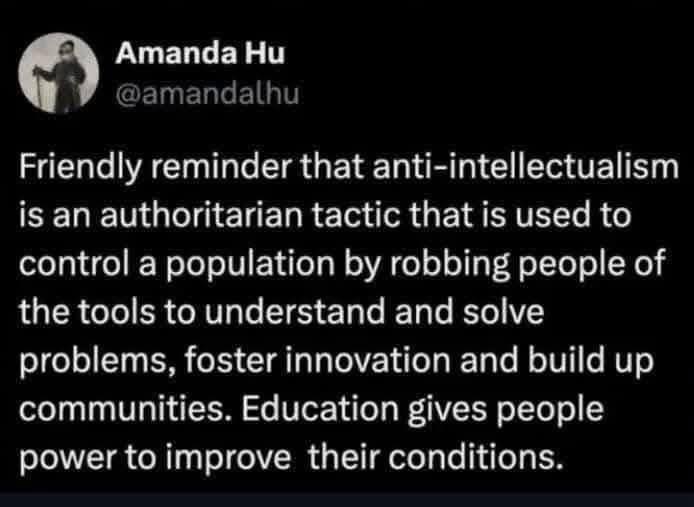

AI takes you out of the conversation, hinders you from maturing into an adult, undermines your sense of citizenship, and destroys democracy. Education at every level isn’t just about teaching you facts–it’s about teaching you how to join our society’s conversation, about learning to speak confidently when you have something informed to say and to learn humbly, which requires listening and reading. Education is about taking up the work of citizenship in a democracy, which is, after all, the work of people governing themselves. When you rely on AI to “read,” “summarize,” “organize,” and draw conclusions for you, you are failing to learn how to take your place. The long-term social harm is profound, and it goes beyond a future in which professionals who must make high-stakes decisions (ER doctors, hostage negotiators, pilots, firefighters, and others who must make split-second decisions based on knowledge and experience) are incapable of doing their jobs. On an even bigger scale, it means that we don’t, as a society, take ourselves seriously enough to learn from each other, to dialogue and deliberate. AI is anti-democratic–and explicitly fascist–, and societies that cave to it won’t find themselves being ruled by robots but by oligarchs.

AI prevents me from getting to know you–and our relationship might be the most valuable thing you get from our time together in class. When you allow AI to do your work, I don’t get to know YOU. College is about networking, not just grades. You want letters of recommendation and connections. Employers come to us searching for new employees—you want me to be able to say, “yes, he’s an outstanding [programmer, social worker, teacher, etc.] but that’s true of all of our students. This one is special because of XYZ, and here are examples of his work that prove it.” You want me to nominate you for scholarships and internships, but I need to know YOUR abilities, not your ability to prompt AI, to do that well. You want me to guide you to opportunities that are a good fit for you so you can be successful. When you lie to me about what you can do, I can’t help you find a good fit in the world outside of college. And if you don’t show your teachers what YOU can do, we will stop trying to connect you to a successful future because we aren’t going to risk our reputations for a student we can’t be sure we actually know. I’m not going to embarrass myself recommending you for something I’m not sure you can do, but when you submit AI-generated work, I can no longer discern what you might actually be qualified for—so you lose all kinds of opportunities; in fact, I’ll have to recommend against you since you are not just an unknown entity but one that refuses to be known and is thus uncoachable. You know what employers hate in a new employee? Someone who hides their mistakes, avoids critique, and doesn’t grow from feedback.

AI trains you to avoid thinking. But the world needs thinkers! The fact is, the world will always require thinkers–and if you aren’t one of them, then someone who doesn’t have your best interests in mind will be thinking on your behalf.

In short, you are in school to learn, and your teachers and professors can’t help you learn if we can’t see what you don’t know. And then we can’t accurately assess you, so we can’t help you in the longer term. We don’t want to turn out graduates who are poorly prepared for their careers and unable to innovate. We want you to be successful, and even though, when a paper is due in an hour and you haven’t written it yet, you might feel like generative AI will enable that, everything about generative AI actually undermines your success.

![A response to the question "Why does being pro-AI feel like a rightwing stance now?": "because it's data centers are built in low income areas, polluting drinkable water, spiking utility bills, so environmental classicism {sic] & racism ANd on top of it because tis made by technofascists that wanna kill art and to turn your brain into sludge](https://anygoodthing.com/wp-content/uploads/2026/03/ai-is-rightwing.webp)

Most of your comments are well thought out. However I have to disagree with this one: “AI elevates the status quo and, inherently, can produce nothing innovative.” AIs are producing all types of innovations. A quick search found these as just an example: Protein Structure & Drug Discovery: AI, specifically models like DeepMind’s AlphaFold, solved the “protein-folding problem,” a challenge that baffled scientists for 50 years. AI is now generating novel drug candidates for human trials, such as those developed by Insilico Medicine. Accelerated Material Discovery: Generative AI models are identifying new materials and engineering solutions, such as reflective paints, much faster than traditional research methods. Autonomous Chip Design: AI has been applied to real production-scale chip design problems, creating efficient layouts that would take humans much longer to engineer. Generative AI in Creativity: Tools like DALL-E 3, Midjourney, and Stable Diffusion can create highly realistic, detailed images from text prompts, while Runway Gen-2 can generate videos from text.


I’m happy that AI is able to support and advance medical research.

I also don’t think these are the kinds of projects my undergrads are aiming for. But perhaps I should have been gentler.
